Introduction

I searched on Google and found that Hadoop provides native Windows support from version 2.2 onward. However, you need to build it yourself, because official Apache Hadoop releases do not ship native Windows binaries. This tutorial therefore aims to provide a step-by-step guide to building a Hadoop binary distribution from the Hadoop source code on Windows. It also gives instructions for setting up Java, Maven, and the other required components.

Apache Hadoop is an open-source Java project, mainly used for distributed storage and large-scale data processing. It is designed to scale horizontally as needed and to support distributed processing across multiple machines. You can find more about Hadoop at

Author's GitHub

I have created a bunch of Spark-Scala utilities at, which might be helpful in some other cases.

Solution for Spark Errors

Many of you may have tried running Spark on Windows and hit an error in the console (shown below).

16/04/02 19:59:31 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform. Using builtin-java classes where applicable
16/04/02 19:59:31 ERROR Shell: Failed to locate the winutils binary in the hadoop binary path
java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

This happens because your Hadoop distribution does not contain native binaries for Windows, as they are not included in the official Hadoop distribution. So you need to build Hadoop from its source code on your Windows machine.

Solution for Hadoop Error

This error is also related to the native Hadoop binaries for Windows. The solution is therefore the same as for the Spark problem above: build Hadoop for Windows from its source code.
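Before rebuilding, it can help to confirm the cause. The `null` in `null\bin\winutils.exe` is the telltale sign: Hadoop 2.x builds that path from the `hadoop.home.dir` system property or, failing that, the `HADOOP_HOME` environment variable, and prints `null` when neither is set. The sketch below assumes a Unix-like shell on Windows such as Cygwin; `check_winutils` is a hypothetical helper name, and it only inspects `HADOOP_HOME`, not the JVM system property.

```shell
#!/usr/bin/env bash
# Sketch: check that Hadoop will be able to find winutils.exe.
# Assumption: HADOOP_HOME should point at the directory whose bin/
# subdirectory holds winutils.exe (check_winutils is a hypothetical name).

check_winutils() {
  # An unset or empty HADOOP_HOME is what yields the "null\bin\winutils.exe" path.
  if [ -z "${HADOOP_HOME:-}" ]; then
    echo "HADOOP_HOME is not set" >&2
    return 1
  fi
  local exe="$HADOOP_HOME/bin/winutils.exe"
  # HADOOP_HOME is set, but the native Windows binary is missing from bin/.
  if [ ! -f "$exe" ]; then
    echo "winutils.exe not found at $exe" >&2
    return 2
  fi
  echo "found $exe"
}
```

Run `check_winutils` after exporting `HADOOP_HOME` for your build; exit status 1 points at the missing variable, while 2 means the variable is set but the binary still has to be built or copied into `bin`.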


Hadoop is an open-source software framework from The Apache Software Foundation that allows applications to handle petabytes of unstructured data in a cloud environment on commodity hardware. Because the system is based on Google's MapReduce and the Google File System (GFS), large data sets are divided into smaller data blocks so that a cluster can process them in parallel. Hadoop works with a distributed file system (HDFS) that stores data on multiple nodes, so that a cluster can process it with high aggregate bandwidth.

Given that Hadoop is relatively new for many organizations, it is important to see how these early adopters use it. Although Hadoop is still at an early stage, and new projects that expand its range of uses keep being started, we still see entirely new patterns emerge. Let's look at these real-life situations from both a technical and a business perspective.
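To make the block splitting concrete, here is a small arithmetic sketch. It assumes the Hadoop 2.x default HDFS block size of 128 MB (the `dfs.blocksize` property); clusters can configure a different value, which the optional second argument accepts. `hdfs_block_count` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: number of HDFS blocks a file of a given size is split into.
# Assumption: 128 MB default block size (Hadoop 2.x dfs.blocksize default).

hdfs_block_count() {
  local file_bytes=$1
  local block_bytes=${2:-134217728}   # 128 * 1024 * 1024
  # Ceiling division: a partial final block still counts as one block.
  echo $(( (file_bytes + block_bytes - 1) / block_bytes ))
}
```

For example, `hdfs_block_count 1073741824` (a 1 GB file) prints 8, so up to eight blocks can be processed in parallel on different nodes. A partial final block only occupies as much disk space as the data it holds.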

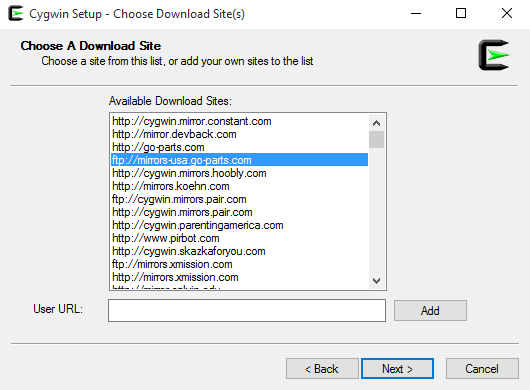

HBase uses the ./conf/hbase-default.xml file for configuration. Some properties do not resolve to existing directories because the JVM runs on Windows. This is the major issue to keep in mind when working with Cygwin: within the shell, all paths are Unix-style, hence relative to the root /. However, every parameter that is consumed by the Windows processes themselves needs to be a Windows setting, hence Windows-style, starting with a drive letter such as C:. Change the following properties in the configuration file, adjusting paths where necessary to match your own installation:

- hbase.rootdir must read e.g. file:///C:/cygwin/root/tmp/hbase/data, or hdfs://127.0.0.1:9000/hbase in case of the Hadoop file system.
- hbase.tmp.dir must read C:/cygwin/root/tmp/hbase/tmp.
- hbase.zookeeper.quorum must read 127.0.0.1, because for some reason localhost doesn't seem to resolve properly on Cygwin.

Make sure the configured hbase.rootdir and hbase.tmp.dir directories exist and have the proper rights set up, e.g. by issuing a chmod 777 on them. Go to c:/cygwin/root/usr/local/hbase-1.2.3/conf and add the following in the hbase-site.xml file.

Now, let's play with some HBase commands. We'll start with a basic scan that returns all columns in the cars table. Using a long column family name, such as columnfamily1, is a horrible idea in production.
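To put a rough number on the column-family claim, here is a back-of-the-envelope sketch. It relies only on HBase storing the family name with every cell, and it deliberately ignores compression and data block encoding, which can shrink the real overhead. `cf_overhead_bytes` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: extra bytes spent purely on a longer column family name,
# because the family name is repeated in every stored cell.
# Assumption: ASCII names (1 byte per character), no compression/encoding.

cf_overhead_bytes() {
  local long_cf=$1 short_cf=$2 cells=$3
  # ${#var} is the string length; the difference is paid once per cell.
  echo $(( (${#long_cf} - ${#short_cf}) * cells ))
}
```

For example, `cf_overhead_bytes columnfamily1 vi 1000000` prints 11000000: about 11 MB per million cells spent on nothing but the family name.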

Every cell (i.e., every value) in HBase is stored fully qualified, that is, together with its row key, column family, column qualifier, and timestamp.

This basically means that long column family names will balloon the amount of disk space required to store your data. In summary, keep your column family names as short as possible.

To start, I'm going to create a new table named cars. My column family is vi, which is an abbreviation of vehicle information. The schema that follows is only for illustration purposes and should not be used to create a production schema. In production, you should create a row ID that helps to uniquely identify the row and that is likely to be used in your queries. One possibility would therefore be to shift the make, model, and year to the left and use these items in the row ID.

create 'cars', 'vi'

Make sure you started your shell as Administrator! Then answer the prompts of this script as follows:

- If it asks to overwrite an existing /etc/ssh_config, answer yes.
- If it asks to overwrite an existing /etc/sshd_config, answer yes.
- If it asks to use privilege separation, answer yes.
- If it asks to install sshd as a service, answer yes.
- If it asks for the CYGWIN value, just accept the default, ntsec.
- If it asks to create the sshd account, answer yes.
- If it asks to use a different user name as the service account, answer no, as the default will suffice.
- If it asks to create the cygserver account, answer yes, then enter a password for the account.

Start the SSH service using net start sshd or cygrunsrv --start sshd.

Notice that cygrunsrv is the utility that makes the process run as a Windows service. Confirm that you see a message stating that the CYGWIN sshd service was started successfully.

Harmonize Windows and Cygwin64

$ mkpasswd -cl > /etc/passwd
$ mkgroup --local > /etc/group

Test SSH from another Cygwin64 terminal as a local user:

$ ssh cygserver@localhost
cygserver@localhost's password:
cygserver@Naveen $ logout
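The service and account steps above can be collected into a few helper functions, which is handy if you repeat the setup on another machine. This is a sketch under stated assumptions: a Cygwin64 shell started as Administrator, the openssh and cygrunsrv packages installed, and sshd already registered as a service by the configuration script. All function names are hypothetical, not Cygwin tools.

```shell
#!/usr/bin/env bash
# Sketch only: the sshd steps above as reusable functions.
# Assumptions: Cygwin64 shell run as Administrator, openssh and cygrunsrv
# installed, sshd already registered as a Windows service.
# The function names below are hypothetical helpers.

start_sshd() {
  # Starts the Windows service; same effect as "net start sshd".
  cygrunsrv --start sshd
}

sshd_status() {
  # Prints the service's current state as reported by cygrunsrv.
  cygrunsrv --query sshd
}

harmonize_accounts() {
  # Regenerate Cygwin's account files from the local Windows accounts.
  mkpasswd -cl > /etc/passwd
  mkgroup --local > /etc/group
}

test_ssh_login() {
  # Log in over SSH as the given user (default: cygserver) and exit at once.
  local user=${1:-cygserver}
  ssh "${user}@localhost" exit
}
```

A typical order is `harmonize_accounts`, then `start_sshd` and `sshd_status`, and finally `test_ssh_login` from a second terminal.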
